I remember the day I discovered that Siri had a nickname option. “This is the most amazing technology I’ve ever seen!” I thought, before putting my phone through its paces with a series of (very cool) requests:

“Call me ‘Buddy’.”

OK, Buddy.

“No, wait. Call me ‘Handsome’.”

OK, Handsome.

“Actually, call me ‘Your Excellence’.”

Of course, it wasn’t just the nickname itself that captured my interest. It was the fact that I could use my assistant to change my assistant, a level of interaction that felt much closer to the science-fiction fantasy of comprehensive AI.

But five years later, the nickname feature seems more like an Easter egg than a typical virtual assistant interaction. In order to make adjustments to most consumer VAs (when such adjustments are available at all), you usually have to go through your device’s settings page, rather than through the VA itself.

Conversational interfaces — not just VAs, but chatbots too — are built to be intuitive and unobtrusive. You’ve heard the selling points: Use your natural language! Get more done without switching apps! Yet, for now, those promises are limited when it comes to customizing VAs to your unique needs.

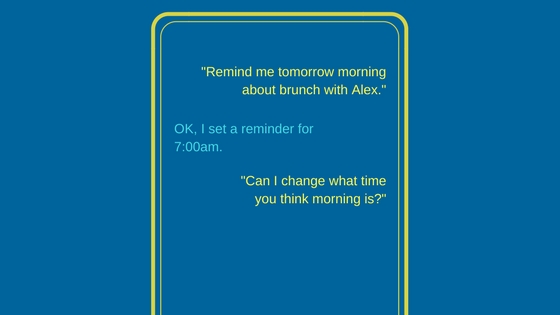

So the question stands: if you ask your VA to change, will it listen?

What We Have Now

Beyond the nickname designation, there are a handful of ways you can currently adjust your VA experience through the VA itself.

Context for contacts: VAs are typically designed to interact with your contact lists. Through voice commands, you can build yourself a more natural experience by assigning contextual information to individual contacts. For example, I can give relationship information like, “Remember that [contact’s name] is my brother,” and the VA will save this information to inform future commands. It’s a relatively basic function, but one that hints at future user-controlled rule creation.

Enable/Disable functionality: As more and more apps are built to interact with VAs, being able to activate or deactivate these different functions is a straightforward but necessary feature, especially when two or more apps may use similar voice commands. Being able to adjust these settings from within a VA makes this a much more nimble process.

Internet-of-Things (IoT) integration: VAs like Alexa or Google Assistant can work through an automation hub to control different connected devices throughout your home. The VA can be used to discover, connect to, and operate these devices, though device-specific settings usually involve manual operation outside of the VA interface.

What We’re Still Missing

In the pursuit of a more organic, comprehensive VA experience, there are several improvement areas where consumers could benefit from increased functionality. To be clear, we’re talking here about user-controlled changes to the VA itself, as opposed to general suggestions about getting the VA to “do more stuff”.

VA control of VA settings: Many assistants allow you to select voice presentation (gender, accent, etc.), assign a personalized wake word, and adjust other access conditions. However, these options are usually not available through the VA interface, but through a separate settings screen.

Chain commands: More and more connected devices are being built with VA functionality; if you have the necessary products, you can change the temperature in your home, adjust the lighting, play music, turn down your crockpot, and a lot more. For now, each of these operations requires a separate voice command, but what if you could link several commands together into one comprehensive action? What if you could tell Alexa to “start movie night” by dimming the living room lights, turning the television on, activating Netflix, and setting your phone to silent? The ability to chain separate commands together, and to do so through your VA, represents a “nice-to-have” opportunity to promote more comfortable, sophisticated usage.

Personal vocabulary rules: Perhaps the most-needed VA controls have to do with the way your own, unique speaking style is understood. Sure, you can tell Siri that your uncle’s name is Bartholomew, but why stop there? “Siri, remember that ‘Snickers’ is my Welsh corgi.” “Alexa, when I say ‘my team’, I’m talking about the Chicago Blackhawks.” Teaching your VA what you mean by certain words and phrases could make associated functions a lot more personal and natural to you. Plus, you might spend a little less time having to clarify your requests.
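To make the last two ideas concrete, here is a minimal sketch of how user-defined routines and personal vocabulary could be stored and applied. Everything in it is an assumption for illustration: `AssistantProfile`, `define_routine`, `remember`, and `expand` are hypothetical names, not part of any real assistant's API, and a real VA would work from parsed speech rather than exact string matches.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


@dataclass
class AssistantProfile:
    """Hypothetical per-user store for chained routines and personal vocabulary."""

    routines: dict[str, list[str]] = field(default_factory=dict)
    vocabulary: dict[str, str] = field(default_factory=dict)

    def define_routine(self, trigger: str, commands: list[str]) -> None:
        # One trigger phrase maps to an ordered list of ordinary commands.
        self.routines[trigger.strip().lower()] = list(commands)

    def remember(self, phrase: str, meaning: str) -> None:
        # "When I say <phrase>, I mean <meaning>."
        self.vocabulary[phrase.strip().lower()] = meaning

    def _apply_vocabulary(self, text: str) -> str:
        # Replace each remembered phrase, ignoring case, on word boundaries.
        for phrase, meaning in self.vocabulary.items():
            pattern = r"\b" + re.escape(phrase) + r"\b"
            text = re.sub(pattern, lambda _m, m=meaning: m, text, flags=re.IGNORECASE)
        return text

    def expand(self, utterance: str) -> list[str]:
        # A routine trigger expands to its commands; anything else passes through.
        cleaned = utterance.strip()
        commands = self.routines.get(cleaned.lower(), [cleaned])
        return [self._apply_vocabulary(command) for command in commands]


profile = AssistantProfile()
profile.define_routine(
    "start movie night",
    [
        "dim the living room lights",
        "turn on the television",
        "open Netflix",
        "set my phone to silent",
    ],
)
profile.remember("my team", "the Chicago Blackhawks")

movie_night = profile.expand("Start movie night")
team_query = profile.expand("When does my team play next?")
```

In this sketch, the routine and the vocabulary rule are both plain data that the user could, in principle, create by voice. The harder part, recognizing that the user is asking to create one, is left out.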

How Classification Can Help

Classification like eContext’s isn’t just about understanding the topic of your text or speech. It’s about identifying user intent. For example, by gathering data on thousands of different user commands, classifying them to eContext categories, and tracking the apps that were used immediately following the command, developers can gain a clearer sense of what users are trying to accomplish.
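The bookkeeping behind that idea can be sketched in a few lines. This is an illustration only: the keyword lookup below is a stand-in for a real classifier and is not eContext's API, and the category names, app names, and log entries are all made up.

```python
from collections import Counter, defaultdict

# Hypothetical keyword-to-category map standing in for a real classifier.
KEYWORD_CATEGORIES = {
    "pizza": "Food Delivery",
    "sushi": "Food Delivery",
    "flight": "Air Travel",
}


def classify(command):
    """Return the first matching category, or 'Uncategorized'."""
    lowered = command.lower()
    for keyword, category in KEYWORD_CATEGORIES.items():
        if keyword in lowered:
            return category
    return "Uncategorized"


def follow_up_apps(log):
    """Count, per category, which app was opened right after each command."""
    counts = defaultdict(Counter)
    for command, next_app in log:
        counts[classify(command)][next_app] += 1
    return counts


# Made-up (command, app opened next) pairs for illustration.
log = [
    ("I want pizza tonight", "DeliveryApp"),
    ("find sushi near me", "DeliveryApp"),
    ("order a pizza", "MapsApp"),
    ("book a flight to Denver", "TravelApp"),
]

counts = follow_up_apps(log)
top_food_app = counts["Food Delivery"].most_common(1)
```

With enough real data, the most common follow-up app for a category becomes a reasonable guess at what users in that category were trying to do.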

This applies not just to the apps and devices that VAs can interact with, but to the VA itself. What kind of language do consumers use when they want to adjust how a VA operates? What about when they want a tutorial on a given function, or when they want to send a customer support message? With a robust understanding of human language, and the flexibility to let users decide for themselves what their own words mean, tech companies can help integrate VAs even more seamlessly into our day-to-day lives.

___

Want to learn more about how to improve VAs? Download our white paper, Enhancing Virtual Assistants with Structured Knowledge.